Best Paper Nomination at “AI in Healthcare 2025”

The paper compares three modern uncertainty quantification methods for Parkinson’s disease screening and shows that uncertainty-aware models can flag risky predictions instead of guessing with unwarranted confidence.

Why uncertainty matters for AI in Parkinson’s care

Parkinson’s disease is usually assessed through symptom scores and expert exams, which can vary across visits and clinicians. New AI tools use remote video and audio data from simple tasks at home, such as finger tapping or reading a short passage, to screen for Parkinson’s. That is promising, but there is a missing piece:

- Most models output a single probability and act as if they always “know”.

- Clinicians, however, need to see when the model might be wrong, so they can review the case or order more tests.

Our work brings uncertainty quantification into this setting, so that models report not only what they predict but also how sure they are.

What we actually built and tested

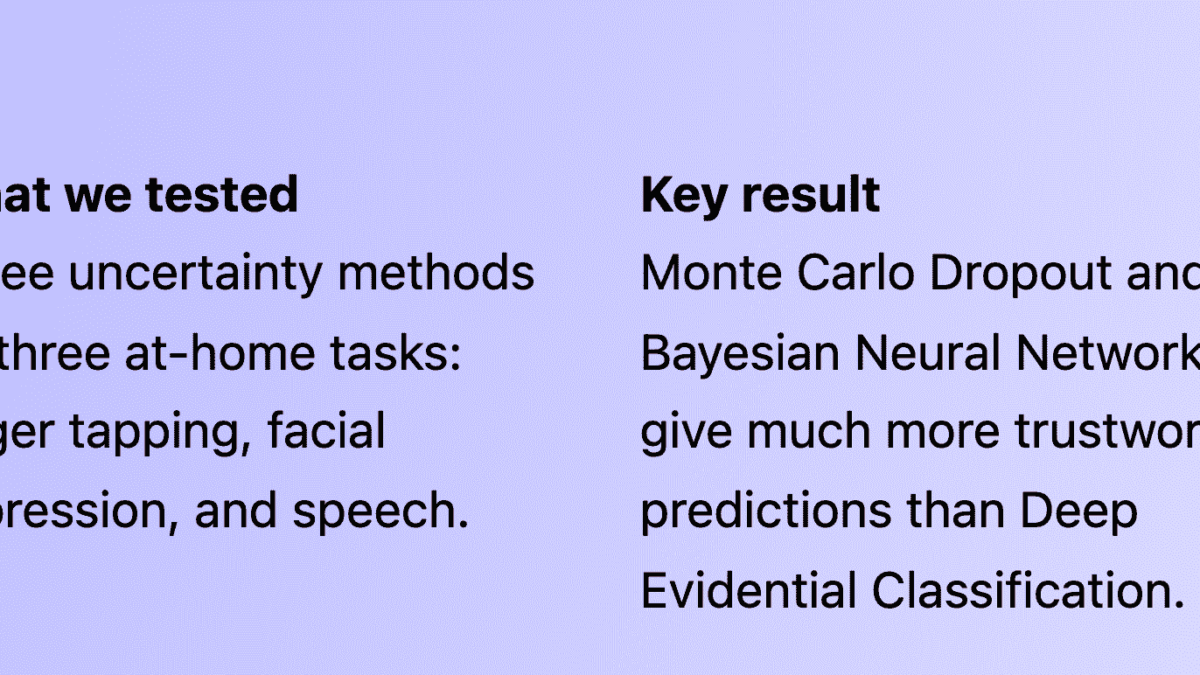

Using publicly shared feature sets from prior work on remote Parkinson’s assessment, we trained three families of models:

- MC Dropout: a standard neural network with dropout kept active at test time, so that repeated forward passes yield a sample of predictions.

- Bayesian Neural Networks (BNN): networks that learn distributions over weights instead of fixed values.

- Deep Evidential Classification (DEC): a single-pass method that predicts class probabilities and uncertainty using a Dirichlet prior.
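To make the MC Dropout idea concrete, here is a minimal sketch in plain Python. It is not the architecture from the paper: the toy one-layer classifier, the dropout applied to input features, and every name and default value (`mc_dropout_predict`, `drop_prob`, `n_samples`) are assumptions chosen for illustration. The point is only the mechanism: keep dropout on at prediction time, run many stochastic passes, and summarize the spread.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def binary_entropy(p):
    """Entropy (in nats) of a Bernoulli distribution with P(class 1) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def forward_with_dropout(x, weights, bias, drop_prob, rng):
    """One stochastic pass of a toy one-layer classifier.

    Each input feature is dropped with probability drop_prob; kept
    features are rescaled by 1 / (1 - drop_prob) (inverted dropout).
    """
    keep = 1.0 - drop_prob
    z = bias
    for xi, wi in zip(x, weights):
        if rng.random() < keep:
            z += wi * xi / keep
    return sigmoid(z)


def mc_dropout_predict(x, weights, bias, drop_prob=0.2, n_samples=200, seed=0):
    """Average many dropout-perturbed passes for one input.

    Returns (mean probability, entropy of the mean, variance across passes).
    """
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    rng = random.Random(seed)
    samples = [
        forward_with_dropout(x, weights, bias, drop_prob, rng)
        for _ in range(n_samples)
    ]
    mean_p = sum(samples) / n_samples
    variance = sum((s - mean_p) ** 2 for s in samples) / n_samples
    return mean_p, binary_entropy(mean_p), variance


if __name__ == "__main__":
    # Hypothetical feature vector and weights, for illustration only.
    mean_p, entropy, variance = mc_dropout_predict(
        x=[1.0, 1.0], weights=[2.0, -1.0], bias=0.0, drop_prob=0.5
    )
    print(f"mean={mean_p:.3f} entropy={entropy:.3f} variance={variance:.4f}")
```

A high variance across passes, or a mean probability near 0.5 (high entropy), is the kind of signal that can be surfaced to a clinician as "the model is unsure here".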

We evaluated them on three tasks:

- Finger tapping from video features, linked to bradykinesia severity.

- Smile / neutral cycles capturing reduced facial expression in PD.

- Read-aloud speech using pre-trained WavLM embeddings for voice changes related to PD.

Headline findings in plain language

- DEC looked good on paper, but failed in practice.

Across all three tasks, DEC had clearly lower accuracy and much worse uncertainty calibration. It was often very confident on wrong predictions.

- MC Dropout and BNNs were both strong and dependable.

For finger tapping, smile, and speech, these models reached higher accuracy and better AUROC / AUPR values, and they produced more sensible uncertainty scores.

- Uncertainty scores helped filter out risky cases.

By discarding only the most uncertain 20 percent of samples, overall risk on the remaining 80 percent dropped noticeably, showing that the models can highlight cases that deserve human review.

- Better uncertainty tracked better clinical performance.

Models that classified PD more accurately also tended to have lower Expected Calibration Error and better risk-coverage curves, which makes them more suitable as decision support tools.
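The two evaluation ideas in these findings, risk at a fixed coverage and Expected Calibration Error, can be sketched in a few lines of Python. This is a generic illustration rather than the paper's evaluation code: the function names, the equal-width binning with 10 bins, and the use of error rate as "risk" are assumptions.

```python
def selective_risk(uncertainty, correct, coverage=0.8):
    """Error rate on the `coverage` fraction of samples with the lowest uncertainty.

    uncertainty: one score per sample (higher means less sure).
    correct:     one bool per sample (True if the prediction was right).
    """
    if not 0.0 < coverage <= 1.0:
        raise ValueError("coverage must be in (0, 1]")
    n_keep = max(1, round(coverage * len(correct)))
    # Sort sample indices from most certain to least certain.
    order = sorted(range(len(correct)), key=lambda i: uncertainty[i])
    kept = order[:n_keep]
    errors = sum(1 for i in kept if not correct[i])
    return errors / n_keep


def expected_calibration_error(confidence, correct, n_bins=10):
    """ECE with equal-width confidence bins.

    confidence: probability assigned to the predicted class, in [0, 1].
    Each bin contributes |accuracy - mean confidence|, weighted by bin size.
    """
    n = len(correct)
    bins = [[] for _ in range(n_bins)]
    for c, ok in zip(confidence, correct):
        idx = min(int(c * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[idx].append((c, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece
```

Calling `selective_risk` at `coverage=1.0` and again at `coverage=0.8` gives the before-and-after comparison described above. Sweeping `coverage` over a grid of values traces out a risk-coverage curve.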

What this means for clinicians and tool builders

- Do not trust raw softmax probabilities as “confidence” in clinical AI.

- Start with MC Dropout or Bayesian Neural Networks when designing PD screening tools.

- Use uncertainty to route patients: high-confidence predictions can support routine decisions, while uncertain ones can trigger manual review or in-person exams.

- Design dashboards that show both predicted label and an easy-to-read uncertainty score or flag.
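As a rough illustration of the routing recommendation, the sketch below maps a predicted probability and an uncertainty score to one of three actions. The function name, the labels, and both threshold values are hypothetical placeholders. In practice the thresholds would need to be chosen and validated on clinical data.

```python
def route_case(prob_pd, uncertainty, max_uncertainty=0.3, decision_threshold=0.5):
    """Return a routing label for one screening result.

    prob_pd:     model's predicted probability of Parkinson's disease.
    uncertainty: model's uncertainty score (higher means less sure).
    Both thresholds are illustrative defaults, not values from the paper.
    """
    if uncertainty > max_uncertainty:
        # Too uncertain to act on automatically: send to a clinician.
        return "manual_review"
    if prob_pd >= decision_threshold:
        return "screen_positive"
    return "screen_negative"
```

A dashboard could display the returned label next to the raw uncertainty score, so the flag and the number behind it are both visible.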

Short term, this can reduce harmful overconfident errors. Long term, it can help health systems accept AI systems as partners instead of black boxes.

Try the code and build on this work

We have released code and hyperparameters so others can reproduce and extend our experiments on new datasets or tasks.

Example extensions include adding more tasks, trying transformer models for time series, or exploring new metrics that better reflect clinical decision making.