Can Randomness Fix Social Media?

Algorithms shape what we see and what we believe. Our latest work shows how small changes in recommendation algorithms can help restore diversity and openness.

Open any social media app and you’ll see it. Like one post about politics, health, or fitness, and your feed quickly fills with more of the same perspective. Different viewpoints fade away. It feels validating, even reassuring, but it also quietly shapes what you believe.

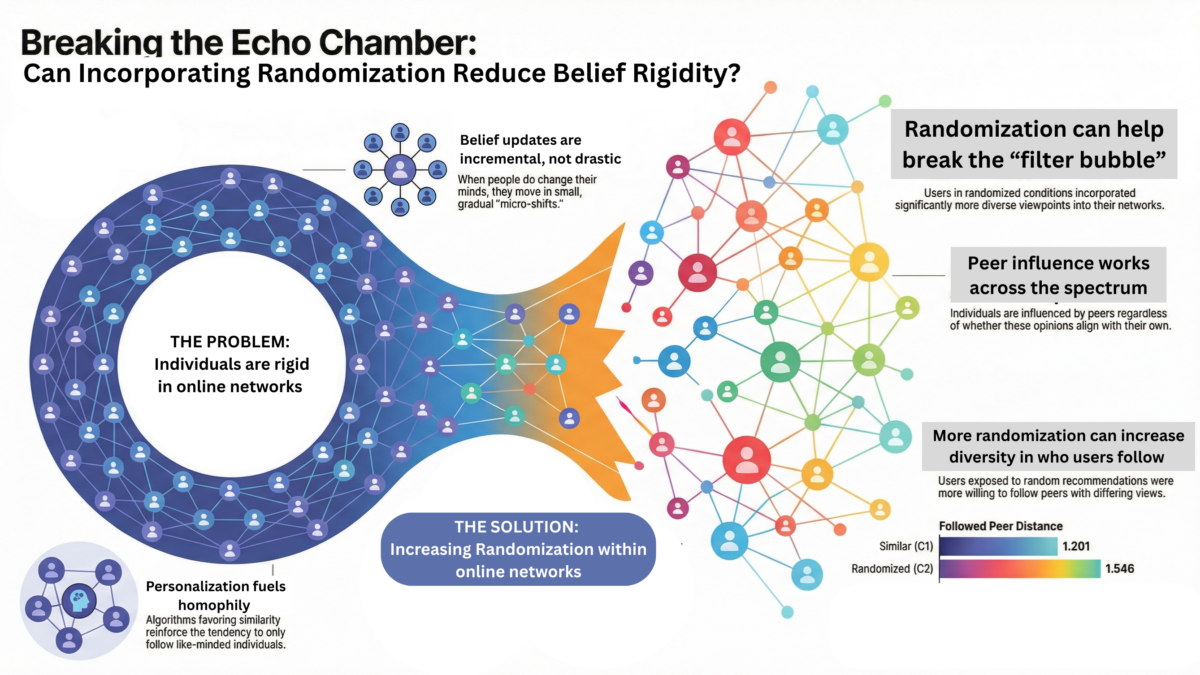

This is not accidental. To improve engagement, platforms rely on highly personalized recommendation algorithms. Over time, personalization can reinforce homophily, create echo chambers, and contribute to polarization. When people mostly interact with like-minded views, beliefs become more rigid, not because they are better reasoned, but because they are rarely challenged.

Imagine walking into a bookstore where every book that questions your worldview has been removed. You would leave convinced that no other opinions exist and that you are obviously right, unaware of what you never had the chance to see. This is effectively what happens when online platforms become overly curated.

In our paper, “Exploring the Role of Randomization on Belief Rigidity in Online Social Networks,” recently accepted for publication in IEEE Transactions on Affective Computing, we examine whether this phenomenon can be countered. We show that a simple design change can help. Instead of eliminating personalization, we introduce randomization into recommendation algorithms to increase the variety of content users see, while still allowing users some agency over their feeds.
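
To make the idea concrete, here is a minimal Python sketch of one way randomness could be mixed into a personalized feed. It is an illustration only, not the method from the paper: the function name `randomized_feed`, the `epsilon` parameter, and the uniform sampling rule are all assumptions made for this example.

```python
import random


def randomized_feed(ranked, pool, k, epsilon, rng=None):
    """Fill up to k feed slots, mixing ranked picks with uniform random picks.

    ranked  -- item ids ordered by a hypothetical personalization score, best first
    pool    -- all candidate item ids, including ones the ranker scored low
    epsilon -- probability that a slot is filled at random instead of by rank
    """
    rng = rng or random.Random()
    feed, seen = [], set()
    ranked_iter = iter(ranked)
    while len(feed) < k:
        remaining = [item for item in pool if item not in seen]
        if not remaining:
            break  # fewer than k distinct candidates are available
        if rng.random() < epsilon:
            # Random slot: any unseen candidate is equally likely.
            choice = rng.choice(remaining)
        else:
            # Personalized slot: next best-ranked item not already shown.
            choice = next((i for i in ranked_iter if i not in seen), None)
            if choice is None:
                choice = rng.choice(remaining)  # ranking exhausted
        feed.append(choice)
        seen.add(choice)
    return feed


# Example: 10 slots, roughly 3 in 10 filled at random (illustrative value).
feed = randomized_feed(ranked=list(range(20)), pool=list(range(200)),
                       k=10, epsilon=0.3, rng=random.Random(42))
```

With `epsilon` set to 0 the feed is fully personalized, and with `epsilon` set to 1 it is fully random. Values in between keep most of the feed relevant while reserving some slots for content the ranker would not have chosen.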

Across a series of experiments, we find that what people see online does influence their beliefs, often pulling them closer to the views they are repeatedly exposed to. When algorithms incorporate more randomization, this feedback loop weakens. Users are exposed to a broader range of perspectives and become more open to differing views.

This matters because polarization is no longer just an online phenomenon. It affects public trust, public health, and democratic processes. Our findings suggest that modest algorithmic changes, which platforms could test today, may help reduce belief rigidity while preserving relevance.

For researchers, this opens new directions for studying algorithmic influence on belief formation. For platforms and policymakers, it offers concrete intervention strategies worth exploring. And for users, the message is simple. If your feed feels too comfortable, that might be by design. Seek out voices that challenge you. The most dangerous feeds are not the ones that upset us, but the ones that convince us we are always right.

Press release from the University of Rochester: https://www.rochester.edu/newscenter/echo-chambers-meaning-social-media-politics-693662/

You can read the paper at: https://ieeexplore.ieee.org/document/11320342